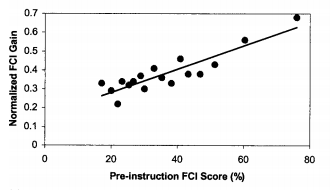

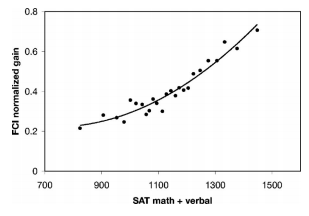

In a previous post, I brought up the issue of disaggregating FCI learning gains. For some perspective, Coletta, Phillips, and Steinert (2007) have looked at disaggregating FCI learning gains by SAT scores. What they find is that students with higher SAT scores learn disproportionately more than students with low SAT scores. They find correlations around 0.5, and note that the trend is more parabolic than linear. Whether you are more inclined to view SAT scores as a measure of “cognitive ability” (as the authors do) or as a proxy for SES (as I do), I’m becoming increasingly confident that disaggregation is important, and that everyone should be thinking about it.

An emerging site that should especially be thinking about this is the PER User’s Guide. At the AAPT Summer 2013 meeting, Adrian Madsen gave a talk about their new initiative to develop a data explorer.

The last line of their abstract asks for feedback. What I can offer here is a conversation.

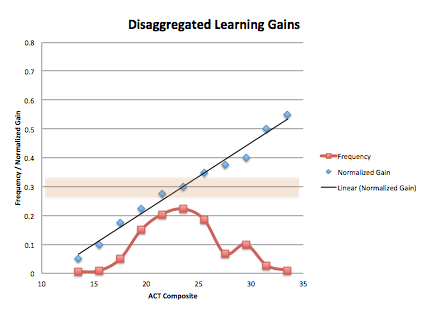

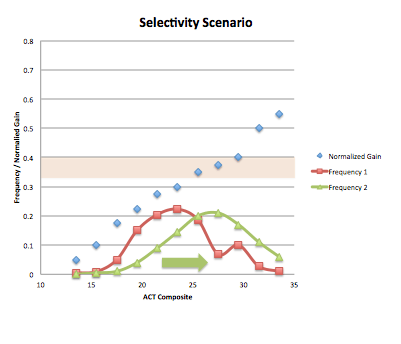

Our institution doesn’t use the SAT much as we are in ACT country. Here is some mock data that somewhat reflects what our institution actually looks like.

The red points on the graph show a broad distribution of students (some students with ACT scores below 15, with the majority between 20 and 25), arising from a fairly low barrier to admission: it’s something like either a 19 on the ACT or a 2.0 GPA. The blue data points show normalized FCI gains broken down by ACT score, and give the same overall trend that Coletta, Phillips, and Steinert find with the SAT. A colleague of mine who saw this trend said, “The smart get even smarter.” The highlighted horizontal line just indicates what our average normalized gain tends to be, which is what is typically reported.
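
For readers who want to try this with their own course data, here is a minimal sketch in Python of what disaggregating by ACT score could look like. The student records, the 5-point bin width, and the function names are all made up for illustration; they are not our institution's data. I'm also assuming the usual definition of normalized gain, (post − pre) / (100 − pre) with scores in percent, and averaging individual student gains rather than computing the gain of the class averages.

```python
from collections import defaultdict


def normalized_gain(pre, post, max_score=100.0):
    """Normalized gain: the fraction of the possible improvement actually achieved."""
    if pre >= max_score:
        raise ValueError("normalized gain is undefined for a perfect pretest")
    return (post - pre) / (max_score - pre)


def gains_by_act_bin(students, bin_width=5):
    """Return {bin_start: (n_students, mean_gain)}, ordered by ACT bin."""
    bins = defaultdict(list)
    for act, pre, post in students:
        bin_start = (act // bin_width) * bin_width  # e.g. ACT 23 -> bin 20
        bins[bin_start].append(normalized_gain(pre, post))
    return {b: (len(g), sum(g) / len(g)) for b, g in sorted(bins.items())}


# Hypothetical (ACT score, FCI pre %, FCI post %) records, invented for this sketch.
students = [
    (14, 20.0, 28.0),
    (18, 20.0, 36.0),
    (21, 30.0, 51.0),
    (23, 30.0, 51.0),
    (24, 40.0, 64.0),
    (27, 40.0, 70.0),
    (31, 50.0, 80.0),
]

# The single number that usually gets reported: the class-wide average gain.
all_gains = [normalized_gain(pre, post) for _, pre, post in students]
print(f"overall average gain: {sum(all_gains) / len(all_gains):.2f}")

# The disaggregated view: how many students are in each bin, and how they did.
for bin_start, (n, mean_gain) in gains_by_act_bin(students).items():
    print(f"ACT {bin_start}-{bin_start + 4}: n={n}, mean gain={mean_gain:.2f}")
```

The per-bin counts play the role of the red points and the per-bin mean gains play the role of the blue points; the overall average corresponds to the horizontal line.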

What I’d like to do now is walk through some different scenarios where higher FCI gains are achieved in different ways, to further the conversation about why disaggregation matters.

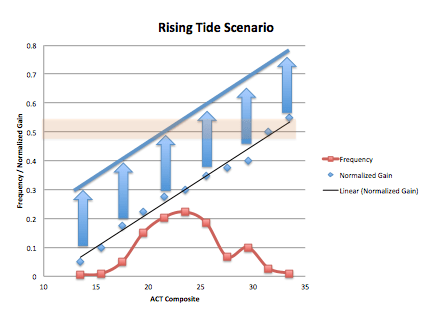

Scenario #1 Improved Learning for Everyone

In this scenario, a course has higher FCI scores because instruction leads everyone to do better. Students on the high end receive a bump, but importantly so do students on the low end. In this scenario, the distribution of students remains the same. If we were to make changes like this, we’d be improving learning without addressing the learning gap. You can of course imagine ways in which you improve learning and increase the learning gap, by helping the high-end students more than the low-end students.

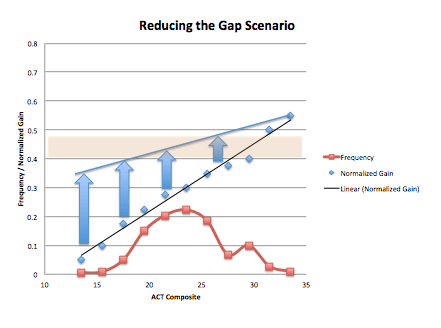

Scenario #2 Reducing the Learning Gap

In this scenario, learning gains are better because students with low ACT scores are learning more, without necessarily helping students on the high end. In this case, learning gains improve and the learning gap decreases.

Scenario #3 Become More Selective

In this scenario, higher learning gains are achieved due to a shift in the distribution of students. This might happen at more prestigious institutions, or it might be achieved by having more stringent requirements for enrolling in introductory physics. Or, this could be achieved by designing a course to pressure struggling students into withdrawing, so they don’t end up on the post test. In this scenario, I’m assuming that the “trend” stays the same, but there could easily be interactions among distributions (red) and learning (blue). For example, a broad distribution may create difficulties with differentiating instruction. Tighter distributions may benefit from having more homogeneous populations.
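
To make the three scenarios concrete, here is a rough Python sketch. Every number in it is invented: the ACT bins, the baseline distribution of students, and the baseline gain trend are hypothetical stand-ins for the red and blue points, chosen only so the arithmetic is easy to follow. The point is that the class average is just a weighted average of the trend over the distribution, so the same average can come from very different situations.

```python
def average_gain(distribution, trend):
    """Class-average gain: per-bin mean gains weighted by the number of students per bin."""
    total = sum(distribution.values())
    return sum(n * trend[b] for b, n in distribution.items()) / total


def learning_gap(trend):
    """Difference in mean gain between the highest and lowest ACT bins."""
    return trend["30+"] - trend["<15"]


# Hypothetical baseline: students per ACT bin (red) and mean gain per bin (blue).
base_dist = {"<15": 10, "15-19": 25, "20-24": 40, "25-29": 20, "30+": 5}
base_trend = {"<15": 0.10, "15-19": 0.20, "20-24": 0.30, "25-29": 0.45, "30+": 0.60}

# Scenario 1: everyone gains 0.10 more; the distribution is unchanged.
s1_trend = {b: g + 0.10 for b, g in base_trend.items()}

# Scenario 2: the low end improves; the high end and the distribution are unchanged.
s2_trend = {"<15": 0.30, "15-19": 0.36, "20-24": 0.40, "25-29": 0.45, "30+": 0.60}

# Scenario 3: the trend is unchanged; the distribution shifts toward high ACT scores.
s3_dist = {"<15": 0, "15-19": 5, "20-24": 40, "25-29": 40, "30+": 15}

cases = {
    "baseline": (base_dist, base_trend),
    "scenario 1": (base_dist, s1_trend),
    "scenario 2": (base_dist, s2_trend),
    "scenario 3": (s3_dist, base_trend),
}
for name, (dist, trend) in cases.items():
    print(f"{name}: average gain {average_gain(dist, trend):.2f}, "
          f"gap {learning_gap(trend):.2f}")
```

With these made-up numbers, all three scenarios move the average from about 0.30 to about 0.40, but only the second one narrows the gap between the top and bottom bins. The average alone can't tell the scenarios apart.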

Discussion

What I hope I’ve done here is start a conversation that might convince you that NOT disaggregating masks important features of what’s happening in our classrooms. This, I believe, should be important to people whether they are staunch believers in “cognitive ability,” staunch advocates for “social justice,” or both. First off, not disaggregating makes comparisons among institutions difficult. An average learning gain of 0.7 and an average learning gain of 0.4 can’t be compared meaningfully without disaggregation. If both courses have similar distributions of students, then they can be compared. If one has a distribution of students toward the right end and the other has a distribution toward the left end, then comparisons need to be done carefully. Second, disaggregation might allow us to better understand how specific institutions have enacted successful reforms (or failed to), and furthermore it might help institutions to assess their situation and make reforms in a more informed way.

Things I’m not saying:

- I’m not saying, “Students with high ACT scores will learn no matter what.” Findings from PER suggest that even successful students fail to meaningfully learn even basic physics concepts when instruction relies heavily on lecture. What we are talking about here is courses that do enact reformed instruction.

- I’m not saying, “You should be satisfied with marginal learning gains if you are at an institution with underprepared students”.

- I’m not saying, “If you have high learning gains, it must be because you teach at a selective school.”

Things I’m wondering about:

- How should we disaggregate? Based on standardized tests? Poverty measures? Math or reasoning? There are all kinds of ways we can, do, and could disaggregate. But what standards of reporting can we argue are most important and informative? What obstacles are there to reporting in these ways?

- What are the downsides of disaggregation? I assume there are some, and we should think and talk about them.

- Scenario one is really interesting to me. Why? There are reasons to suspect education communities alone cannot address the fact that the blue dots trend the way they do. (Perhaps we can lessen the steepness?) The truth is that the correlation between poverty and educational achievement is strong: it is robust across scales (e.g., classrooms, schools, states, countries) and across shifts in measures (SAT, PISA, FCI, etc.). That said, there are good reasons to believe that some institutions of learning fare better, despite being subject to the same poverty correlations. For example, I’ve seen Michael Marder talk about poverty and education, in which he shows how every state is subject to the poverty trend, but despite that, the poorest students in states like Texas and Massachusetts achieve about the same as the richest students in Alabama and Mississippi. In other words, it appears you can shift the line up and down vertically.

- This isn’t to say that Scenario two isn’t important, or that I don’t care about it. I have argued in my previous post (it is really Steve Kanim’s point) that more research needs to be done regarding physics learning in courses, at institutions, and among students with less preparation and opportunity. It could be that students with high ACT and SAT scores learn more in most of our reformed environments precisely because those environments are based on research on how those students learn, rather than that other students are learning less because of their under-preparation. In that case one might say, “The smart get smarter, in part because we mostly study how to help the smart get even smarter.”