Yesterday, our department received the first-ever “President’s Award for Exceptional Departmental Initiatives for Student Academic Success” from the MTSU President.

The Political View:

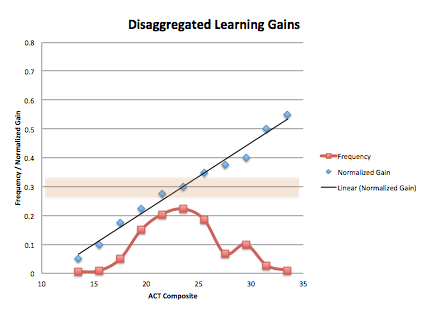

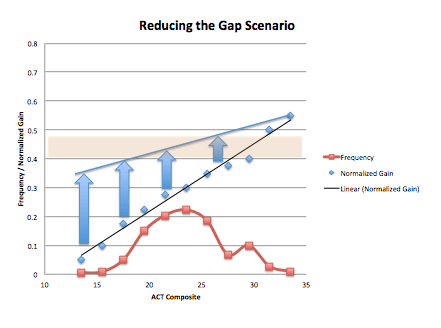

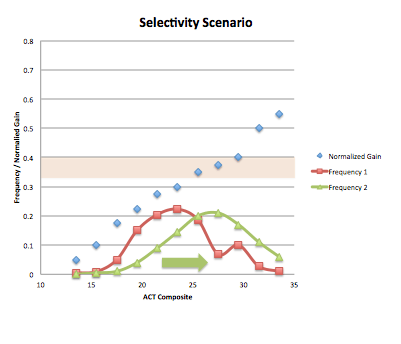

Tennessee state schools used to be funded based on the number of students enrolled: the more students you had, the more state money you got. Historically, MTSU grew to become the largest undergraduate-serving institution in our state, in part by having fairly low admission standards and accepting nearly 100% of students who meet them. I’ve heard that students must have either a GPA of at least 2.0 or an ACT score of 19. For reference, college readiness on the ACT clocks in at 24. At MTSU, the average ACT score is around 19, and we draw mostly from our own state, which has the 3rd-lowest ACT scores in the country.

Two years ago, Tennessee switched from funding state schools by enrollment to funding them by measures of retention and graduation. The formula is complex: it takes into account how many freshmen become sophomores, how many sophomores become juniors, how many juniors become seniors, and how many seniors graduate, as well as how many students graduate within four years and within six years. In this landscape, MTSU is looking for ways to increase retention and graduation. And like many other schools around the country, MTSU has (too) many introductory math and science courses in which a high percentage of students receive a D or an F or withdraw (DFW).

So how do we fit into this? Thanks to a variety of reforms we’ve put in place in our introductory physics courses, our DFW rates are far below those of other math and science courses on campus. In addition, over the past 15 years, we have grown from graduating 1-2 physics majors per year to 10-12 per year. And our majors excel: they achieve very high marks on the Major Field Test and earn a disproportionate number of Goldwater and Fulbright awards. Amid the current political and financial pressures, our department stands out in both retention and graduation, both in how we compare to other departments and in how much we have improved.

The Practical View:

None of this happened by accident. So what have we actually done? Here is a brief summary.

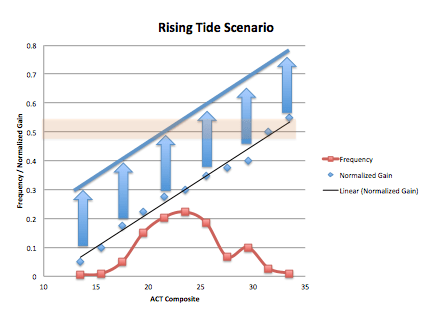

#1 Introductory Course Reform. Both our algebra-based and calculus-based physics courses are now run in a studio setting, which involves a lot of collaborative problem-solving and class discussion. The one hour of lecture per week uses interactive engagement methods as well (e.g., clickers). In the calculus-based course, we have adopted a research-based text (Matter & Interactions). In the spring, we hope to pilot a new section of algebra-based physics that also uses a research-based curriculum. Not only have these reforms reduced DFW rates, but many of our majors now appear to switch to physics after taking our introductory courses.

#2 Physics Majors as TAs. All physics majors are required to serve as undergraduate TAs in our introductory physics courses. In the spring, we hope to pilot an actual Learning Assistant (LA) program.

#3 Full-time Instructors. While many colleges hire a revolving cast of part-time instructors, our department hires and keeps full-time instructors. Most of them have been around longer than I have.

#4 Targeted Recruiting. Our department has been mailing MTSU physics brochures to students with high ACT scores and an indicated interest in math or science. The mailing asks students to go online and fill out a brief survey to receive an MTSU Physics and Astronomy T-shirt. That information allows us to follow up with students and invite them to campus later on.

#5 Career-focused Tracks. One common criticism of physics departments is that their curricula focus only on preparing students for graduate school in physics. Our department is constantly tweaking its curriculum to meet the needs of our students, to attract majors, and to prepare students in diverse ways. Among other options, we now have concentrations in Teaching Physics and Applied Physics, which are more job-oriented.

#6 Changing Prerequisites. We recently changed the prerequisites for many of our courses from merely “passing” the prerequisite course to earning a C or better. Looking at the statistics, we found that almost all of the students who received D’s in the prerequisite courses ended up failing or withdrawing from our classes. Raising the prerequisites is unlikely to hurt enrollment, because we already turn students away due to over-enrollment.

#7 Free Tutoring Services. Our department pays physics majors to staff a tutoring room several days a week. Previously, only minority students could receive free tutoring; other students had to pay for tutors.
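To make the prerequisite analysis in #6 concrete, here is a minimal Python sketch of how one might tabulate DFW rates by the grade students earned in a prerequisite course. The records, the grade labels, and the `dfw_rate_by_prereq` function are hypothetical and made up for illustration; they are not our department’s data or the analysis we actually ran.

```python
from collections import defaultdict

# Hypothetical records: (grade in the prerequisite, outcome in the physics course).
# These values are invented for illustration; they are not MTSU data.
RECORDS = [
    ("A", "A"), ("A", "B"), ("B", "C"), ("B", "W"),
    ("C", "C"), ("C", "D"), ("D", "F"), ("D", "W"), ("D", "D"),
]

DFW = {"D", "F", "W"}  # outcomes that count toward the DFW rate


def dfw_rate_by_prereq(records):
    """Return {prerequisite grade: fraction of those students who earned a D, F, or W}."""
    totals = defaultdict(int)
    dfw_counts = defaultdict(int)
    for prereq_grade, outcome in records:
        totals[prereq_grade] += 1
        if outcome in DFW:
            dfw_counts[prereq_grade] += 1
    # Sort by grade label so the table reads A, B, C, D.
    return {g: dfw_counts[g] / totals[g] for g in sorted(totals)}


if __name__ == "__main__":
    for grade, rate in dfw_rate_by_prereq(RECORDS).items():
        print(f"prerequisite {grade}: DFW rate {rate:.0%}")
```

With real enrollment records, a table like this makes it easy to see whether students who earned a D in the prerequisite fare much worse than students who earned a C, which is the pattern that motivated raising the requirement.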

Other Factors:

#1 Leadership. Our department chairs deserve a great deal of the credit here, along with another faculty member in the department who has committed his career to improving courses, curriculum, and student success. Our efforts are sustained and coherent rather than fleeting and fragmented.

#2 Data-driven Mindset. Our department makes changes based on data, not on willy-nilly opinions. We analyzed how DFW rates relate to prerequisite grades before changing our prerequisites. Our lower-than-desired gains on the Force Concept Inventory (FCI) are the current impetus for adopting a research-based curriculum and an LA program. Data from interviews with graduates led to the most recent Applied Physics concentration. Data on the number of physics teachers we’ve prepared and the state’s need for physics teachers spurred our Physics Teaching concentration. Any one person might not like some of the changes we make, and someone might think we need to make more, but our department is engaged in a process of continual improvement, one in which arguments and outcomes are judged on evidence. That’s where you want any educational community to be.
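For readers outside physics education: FCI results are commonly reported as Hake’s average normalized gain, the fraction of the possible pretest-to-posttest improvement that a class actually achieves. Assuming that is the measure behind “FCI gains” here (the post does not say), it is computed from class-average pretest and posttest percentages:

$$
\langle g \rangle = \frac{\langle \%\,\text{post} \rangle - \langle \%\,\text{pre} \rangle}{100 - \langle \%\,\text{pre} \rangle}
$$

For example, a hypothetical class averaging 40% on the pretest and 55% on the posttest has $\langle g \rangle = (55 - 40)/(100 - 40) = 0.25$. In Hake’s survey, gains below 0.3 were typical of traditional lecture courses, while interactive-engagement courses tended to land between 0.3 and 0.7.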